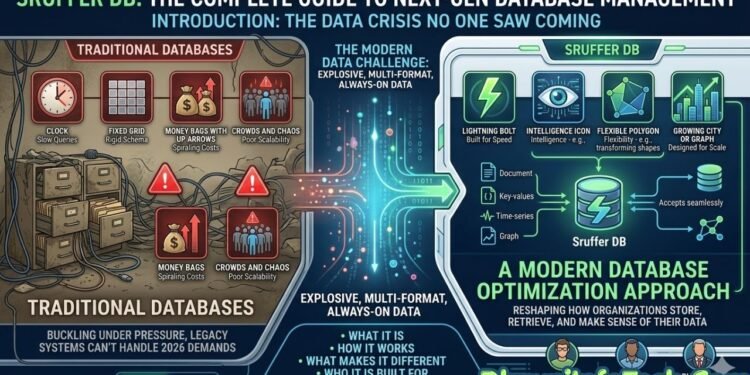

Introduction: The Data Crisis No One Saw Coming

Every business today is swimming in data. From customer transactions and IoT sensor readings to AI-generated insights and real-time analytics dashboards — the sheer volume of information flowing through modern organizations in 2026 is unlike anything seen before. And yet, most businesses are still relying on database systems that were built for a very different era.

Traditional databases are buckling under this pressure. They were never designed to handle the explosive, multi-format, always-on data demands of today’s digital landscape. Slow query times, rigid schemas, spiraling infrastructure costs, and poor scalability have become everyday frustrations for developers, data engineers, and business leaders alike.

This is where Sruffer DB enters the picture.

Sruffer DB is a modern database optimization approach that is reshaping how organizations store, retrieve, and make sense of their data. It is built for speed, intelligence, and flexibility — three things that legacy systems have long struggled to deliver at scale.

This guide covers everything someone needs to know about Sruffer DB: what it is, how it works, what makes it different, who it is built for, and how to get started. Whether someone is a seasoned developer or a business owner exploring smarter data solutions, this article has something useful for them.

What Is Sruffer DB?

At its core, Sruffer DB is a database optimization approach that improves data retrieval speed, query efficiency, and overall system performance through structured indexing and smart query design. It is not simply a new type of database engine — it is a philosophy of working with data more intelligently.

What makes Sruffer DB stand out from older systems is its fundamental shift in priorities. Traditional databases tend to focus on raw speed, scale, or transaction volume. Sruffer DB, on the other hand, prioritizes understanding. It is built around the idea that data should tell a story, not just sit in tables waiting to be queried.

This difference in mindset leads to a very different kind of system — one that is deeply aware of how data is connected, what patterns exist within it, and how it can be surfaced quickly and meaningfully when users need it most.

Who Is Sruffer DB Designed For?

Sruffer DB is built with a wide range of users in mind:

- Developers who need fast, flexible data access without dealing with rigid schema constraints

- Startups that need scalable infrastructure without heavy upfront costs

- Enterprises managing complex, high-volume data environments across multiple departments and regions

In short, if an organization is working with large, growing, or complex datasets and finding that current systems are slowing them down — Sruffer DB is worth taking seriously.

Core Architecture of Sruffer DB

Understanding how Sruffer DB is built helps explain why it performs the way it does. Its architecture is both elegant and practical.

Modular and Distributed by Design

Sruffer DB adopts a modular and distributed design, organized around three main layers:

- Storage Layer — Responsible for how and where data is physically held

- Processing Layer — Handles computation, indexing, and query execution

- Interface Management Layer — Manages how users and applications interact with the data

Each of these layers operates independently, yet they collaborate seamlessly to deliver fast, reliable results. This modular approach means that one layer can be upgraded or scaled without disrupting the others — a major advantage over monolithic legacy systems.

Cellular Data Logic Model

Rather than storing data in conventional rows or documents, Sruffer DB employs a distributed, cellular data logic model where information is stored in small, efficient clusters. Think of it like organizing a library not by shelving every book in one massive room, but by distributing them across multiple smaller, topic-specific rooms — each optimized for quick access.

This approach dramatically reduces retrieval time and allows the system to handle queries that would bog down a traditional database.

Schema-on-Read Flexibility

One of the most developer-friendly features of Sruffer DB’s architecture is its Schema-on-Read model. Instead of requiring a strict, predefined schema before data can be loaded, Sruffer DB applies structure at the time of reading. This gives agile teams the freedom to ingest raw or semi-structured data without lengthy setup processes, and iterate on schema design as their understanding of the data evolves.

Hybrid Cloud Compatibility

Sruffer DB is built to work in hybrid cloud environments, making it easy for organizations to run workloads across on-premise infrastructure and public cloud platforms simultaneously. This flexibility is essential for businesses navigating complex compliance requirements or gradually migrating from legacy systems.

Key Features of Sruffer DB

Sruffer DB comes packed with features that directly address the limitations of traditional database systems. Here is a breakdown of the most important ones.

Real-Time Analytics

One of Sruffer DB’s most powerful capabilities is its support for real-time analytics. It enables businesses to make decisions based on live data rather than outdated reports, allowing users to visualize trends the moment they emerge. In industries where timing is everything — finance, logistics, healthcare — this feature alone can be a game-changer.

Advanced Indexing

Sruffer DB’s advanced indexing techniques enhance search speed and accuracy, making it significantly easier to find specific datasets among massive volumes of information. Unlike traditional indexing methods that can become sluggish at scale, Sruffer DB’s indexing is optimized for high-cardinality data and complex query patterns.

Elastic Auto-Scaling

Traffic spikes are unpredictable. With Sruffer DB’s elastic auto-scaling, system resources automatically expand as demand increases — without any interruption to performance. When usage drops off, the system scales back down to optimize costs. This “pay for what you use” elasticity is ideal for businesses with fluctuating data workloads.

Security and Encryption

Data security is non-negotiable in 2026, and Sruffer DB takes it seriously. It uses advanced encryption protocols to protect data both at rest and in transit. Additionally, role-based access controls ensure that sensitive information is only accessible to those who genuinely need it, reducing the risk of internal breaches and unauthorized access.

Seamless Integrations

Sruffer DB is designed to integrate rather than replace existing tools. It acts as a connective layer, pulling structured and semi-structured data from various sources into a coherent, unified whole. Whether an organization is using CRM platforms, analytics tools, or custom-built applications, Sruffer DB fits into the existing ecosystem without forcing a complete overhaul.

Sruffer DB vs. Traditional Databases

It is worth taking a closer look at how Sruffer DB stacks up against the legacy systems that most organizations are still using today.

The Pain Points of Legacy Systems

Traditional databases were built for a world where data was simpler, slower, and more predictable. Today, they fall short in several key areas:

- Rigid schemas make it difficult to adapt to changing data structures

- Vertical scaling is expensive and has a ceiling

- Slow query performance at large scale frustrates users and slows decision-making

- High maintenance overhead demands specialized teams just to keep systems running

Perhaps most significantly, traditional databases often require substantial upfront investments in hardware and licenses, plus ongoing maintenance costs for specialized personnel. For growing companies, this model simply does not scale well financially.

How Sruffer DB Changes the Equation

Sruffer DB shifts this paradigm with a more flexible, cloud-based pricing model. Users pay for what they need, which fosters a scalable approach that aligns expenses directly with growth. There is no need to over-provision for peak loads or lock into expensive long-term hardware commitments.

Side-by-Side Comparison

| Feature | Traditional Database | Sruffer DB |

|---|---|---|

| Speed | Moderate (degrades at scale) | High (optimized at all scales) |

| Cost | High upfront + maintenance | Flexible, usage-based pricing |

| Scalability | Vertical (limited) | Elastic horizontal scaling |

| Schema | Schema-on-Write (rigid) | Schema-on-Read (flexible) |

| Integration | Often siloed | Built for seamless integration |

| Real-Time Capability | Limited | Native support |

The difference is clear. Sruffer DB is not just incrementally better — it represents a fundamentally different approach to how data infrastructure should work.

Use Cases by Industry

Sruffer DB is versatile enough to deliver value across a wide range of industries. Here are some of the most compelling real-world applications.

Healthcare

In healthcare, data accuracy and security are matters of life and death. Sruffer DB enables secure patient record management, ensures regulatory compliance with data protection standards, and allows medical professionals to retrieve critical patient information quickly and reliably.

Retail and eCommerce

Speed is everything in online retail. Without proper database optimization, search results might take several frustrating seconds to load. With a Sruffer DB approach, results appear almost instantly — keeping customers engaged and reducing bounce rates. Product catalog searches, inventory updates, and order tracking all benefit from its advanced indexing and real-time capabilities.

Finance

In the financial sector, milliseconds matter. Sruffer DB supports real-time transaction processing and risk assessment, enabling financial institutions to detect fraud, process payments, and run compliance checks at a speed that legacy systems simply cannot match.

Education

Educational institutions managing thousands of student records, course enrollments, and academic outcomes benefit from Sruffer DB’s ability to deliver streamlined, accurate, and quickly accessible academic data — whether for administrative reporting or personalized learning analytics.

AI and Analytics

Perhaps the most exciting frontier is AI. Modern AI systems generate and consume enormous volumes of unstructured data. Sruffer DB handles these large-scale unstructured datasets with ease, making it an ideal backbone for consumer behavior analytics, recommendation engines, and machine learning pipelines.

How to Get Started with Sruffer DB

Getting started with Sruffer DB is more straightforward than many people expect. Here is a practical roadmap for onboarding.

Step 1: Set Up an Account

The first step is to visit the official Sruffer DB website, create an account, and explore the dashboard. Most users find that the interface is intuitive and well-documented, with tutorials available to guide newcomers through the initial setup.

Step 2: Follow Onboarding Best Practices

To make the most of the onboarding experience, it helps to:

- Define data goals early — Know what questions the data needs to answer before building schemas or pipelines

- Start small — Import a manageable dataset first to understand how queries and indexing behave before scaling up

- Involve stakeholders — Make sure that the teams who will use the data are part of the setup conversation

Step 3: Use Built-In Tools Effectively

Sruffer DB comes with a range of productivity tools that users should take full advantage of:

- Tags and categories help organize datasets and make them easier to discover

- Automated reports save time and ensure that key metrics are always up to date

- Collaboration tools allow teams to share dashboards, datasets, and insights in real time

Common Mistakes to Avoid with Sruffer DB

Even a powerful tool like Sruffer DB can underperform if it is not used correctly. Here are the most common pitfalls to watch out for.

Over-Indexing

One of the most frequent mistakes is creating too many indexes. While indexing improves read speed, too many indexes can significantly slow down write operations. It is important to index strategically — focusing on the fields and query patterns that are used most frequently.

Ignoring Query Analysis

Failing to regularly analyze query performance leads to inefficiencies that compound over time. Sruffer DB provides tools to monitor and evaluate query behavior — and ignoring these insights means missing easy optimization opportunities.

Skipping Monitoring

Without proper monitoring, issues go unnoticed until they become serious problems. Setting up automated alerts and performance dashboards from day one is a habit that pays dividends over the long run.

Over-Normalization

While normalizing data is generally a good practice, over-normalization can lead to overly complex query logic and slower performance. Finding the right balance between normalization and practical usability is key.

Neglecting Caching

Caching is one of the most effective performance tools available, yet many teams overlook it entirely. Failing to implement a solid caching strategy means that frequently accessed data is being retrieved from scratch every single time — a significant and unnecessary drain on resources.

Tips for Getting the Most Out of Sruffer DB

For those who want to go beyond the basics, here are some proven strategies for maximizing the value of Sruffer DB.

Master the Querying System

It pays to fully understand Sruffer DB’s querying capabilities and data structures. The more fluent someone becomes in writing optimized queries, the faster and more reliably the system will perform. Take time to explore advanced query features beyond basic retrieval.

Keep Data Fresh

Data that is stale or inaccurate undermines everything that Sruffer DB is built to deliver. Regularly updating data to ensure accuracy — and setting up automated data refresh pipelines where possible — keeps the system running on a foundation of reliable information.

Leverage Real-Time Collaboration

One of Sruffer DB’s underutilized strengths is its real-time sharing and collaboration features. Teams that actively use these capabilities to share dashboards and datasets tend to make faster, better-aligned decisions.

Combine Indexing with Caching

For maximum performance gains, it is worth combining smart indexing strategies with a robust caching layer. These two approaches complement each other well — indexing speeds up initial retrieval, while caching ensures that repeated requests are handled with minimal overhead.

Engage with the Community

Sruffer DB has a growing community of users, developers, and data engineers. Joining forums, user groups, and developer communities is one of the best ways to stay current with best practices, discover tips from experienced users, and get help when challenges arise.

Conclusion: Why Sruffer DB Matters in 2026

The way the world works with data has changed permanently. The volume, velocity, and variety of data that organizations need to manage in 2026 demands tools that are faster, smarter, and more adaptable than what most companies currently have in place.

Sruffer DB is not just a buzzword — it is a practical, proven approach to making databases faster, smarter, and more scalable. From its modular architecture and real-time analytics to its elastic scaling and seamless integration capabilities, it addresses the real pain points that businesses face every day.

Whether someone is a developer tired of fighting with legacy systems, a startup looking to build a solid data foundation from day one, or an enterprise seeking to modernize without chaos — Sruffer DB offers a compelling path forward.

The next step is simple: explore, implement, and optimize. The tools are there. The architecture is ready. The only question is how soon the leap gets made.

Also Read: 2rsb9053 Meaning, Structure, and Growing Interest